Lean System Design: URL Shortener

Hey!

As I wrote in my previous post, I've become really interested in Lean System Design: real-life system design that isn't forced to satisfy Google-scale requirements.

Today, I want to review a classic system design interview question: the URL Shortener.

The task is simple – we need to design an application that converts long URLs to short ones, which can then be used for redirects to the original URLs. The goal is to have a short URL that can be easily shared as text or a readable QR code.

Common examples of such services are Bitly and TinyURL.

So we have just two use cases here: creating a tiny URL and opening a tiny URL.

And of course, we have non-functional requirements. Let's use Alex Xu's popular book, System Design Interview, as a reference for what these requirements are and for the canonical interview solution to this task.

Requirements

Interview requirements:

- 100 million URLs generated per day

- The resulting URLs should be as short as possible

- High availability, scalability, etc.

From these requirements, we can derive a few estimates to get a better feel for the numbers:

- 100 million URLs per day is about 1160 writes per second

- We assume the read-to-write ratio is 10:1, so we'll have 11600 reads per second

- We assume our service will run for 10 years, so we'll have 365 billion records, and with an average URL length of 100 chars (about 100 bytes), we'll need 36.5 TB of storage
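These estimates are plain arithmetic. The small Go sketch below makes the assumptions explicit. The inputs (100M URLs per day, a 10:1 read ratio, 10 years, 100 bytes per URL) come straight from the list above. The `estimate` function name is only illustrative.

```go
package main

import "fmt"

const secondsPerDay = 24 * 60 * 60

// estimate turns the interview requirements into rough capacity numbers.
// All inputs are assumptions taken from the requirements list.
func estimate(urlsPerDay, readWriteRatio, years, avgURLBytes int64) (writesPerSec, readsPerSec float64, records int64, storageTB float64) {
	writesPerSec = float64(urlsPerDay) / secondsPerDay
	readsPerSec = writesPerSec * float64(readWriteRatio)
	records = urlsPerDay * 365 * years              // ignores leap days
	storageTB = float64(records*avgURLBytes) / 1e12 // decimal terabytes
	return
}

func main() {
	w, r, n, tb := estimate(100_000_000, 10, 10, 100)
	fmt.Printf("writes/s: %.0f\nreads/s: %.0f\nrecords: %d\nstorage: %.1f TB\n", w, r, n, tb)
}
```

It prints about 1,157 writes/s and 11,574 reads/s (rounded to 1,160 and 11,600 in the list). It also prints 365 billion records and 36.5 TB of raw URL storage, before any indexes or per-row overhead.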

OK, looks solid.

But are these requirements and assumptions realistic?

Let's see what we know about the biggest players on the market – Bitly and TinyURL.

- Bitly, on their main page, says they have 256M Links & QR Codes created monthly

- Bitly in 2022 had 5.7M monthly active users and 500K+ customers, according to their press release

- Bitly also says in the same press release that they have "10 Billion+ Clicks and QR Code Scans Every Month"

- TinyURL says it's trusted by ~3.9M users

- TinyURL has created 30 billion short URLs over 23 years

What can we see here? First of all, the biggest player on the market, Bitly, creates just 256M URLs per month. That's only about 8 million URLs per day. And 10 billion clicks per month works out to only about 330 million redirects per day.

And these numbers convert to:

- 93 writes per second

- 3820 reads per second
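The conversion from Bitly's published totals to per-second rates can be sketched the same way. The first two calls use the rounded daily figures above. The last two start from the monthly figures and assume a 30-day month, which is an assumption of this sketch.

```go
package main

import "fmt"

// perSecond converts a count observed over `days` days into an average rate per second.
func perSecond(count, days float64) float64 {
	return count / (days * 24 * 60 * 60)
}

func main() {
	// Rounded daily figures used in the text.
	fmt.Printf("writes/s: %.0f\n", perSecond(8e6, 1))   // ~8M links per day
	fmt.Printf("reads/s:  %.0f\n", perSecond(330e6, 1)) // ~330M clicks per day

	// Directly from the monthly figures, assuming a 30-day month.
	fmt.Printf("writes/s: %.0f\n", perSecond(256e6, 30)) // 256M links per month
	fmt.Printf("reads/s:  %.0f\n", perSecond(10e9, 30))  // 10B clicks per month
}
```

The daily figures give about 93 writes/s and 3,819 reads/s. The monthly totals give about 99 and 3,858. Either way, these are averages in the same ballpark, far below the interview numbers.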

So the biggest player on the market, whose scale I believe is hard to reach from a business perspective, handles a much smaller load than the task requires: roughly 12 times fewer writes and 3 times fewer reads. If you want to build something similar in your company, your scale will probably be even smaller than that.

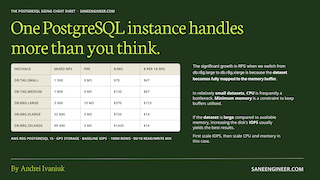

How much hardware do you actually need for this kind of load? I benchmarked PostgreSQL across 8 AWS instance types – spoiler: the cheapest one already handles more than Bitly. Subscribe and get the cheat sheet.

Interview System Design

How can we design such a system?

There are two classical solutions to this, both described in Alex Xu's book:

- Hash the long URL, insert the result into a relational DB, and on a collision, append a predefined string to the long URL and hash again, repeating until the result is unique

- Use a distributed unique ID generator, and convert these IDs to Base62

Both of these options use a fixed-length ID to support the maximum number of URLs that can be generated (over 10 years) and to keep short URLs short.
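How long does that fixed-length ID have to be? A quick sketch answers this. It finds the smallest length n with alphabet^n ≥ total, using the 365 billion records from the 10-year estimate. Base62 means the 62 alphanumeric characters, and Base64URL adds `-` and `_`.

```go
package main

import "fmt"

// minCodeLength returns the smallest n such that alphabet^n >= total,
// i.e. the shortest fixed-length code that can represent every ID.
// (capacity could overflow uint64 for extreme totals; fine for these inputs.)
func minCodeLength(total, alphabet uint64) int {
	n := 0
	for capacity := uint64(1); capacity < total; capacity *= alphabet {
		n++
	}
	return n
}

func main() {
	const tenYears = 365_000_000_000 // 100M URLs/day for 10 years
	fmt.Println("Base62:", minCodeLength(tenYears, 62))
	fmt.Println("Base64:", minCodeLength(tenYears, 64))
}
```

Both print 7. At 62^6 ≈ 56.8 billion, six characters are too few for 365 billion IDs. At 62^7 ≈ 3.5 trillion, seven leave plenty of headroom.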

I agree that both will work, and they are good answers in a system design interview today. But what's not ideal about them?

Hashing

The known issue with this option is that you need to check whether the hash is already in use. To do this efficiently, you will need an index in your DB. The ideal fit here is something with a Bloom filter. A Bloom filter is a probabilistic data structure that tells you an element is definitely not in the set, or that it might be, so only the "might be" answers require an exact lookup. A regular B-tree index will, of course, work here as well.

So the index speeds up the check for whether a hash is already in use. At the same time, the DB has to maintain this index on every insert, which slows inserts down.

Distributed Unique ID Generator

The Distributed Unique ID Generator option isn't that bad. We want to use an ID generator here that reduces the chance of ID collisions in a distributed system to zero or nearly zero. One option is to use Snowflake ID.

With this option, you don't need to pay a lot of attention to collisions or maintaining your index. And this is a good direction if you really need to scale. But let's see what we can achieve without going distributed.
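For reference, a Snowflake-style generator packs a timestamp, a machine ID and a per-millisecond counter into one 64-bit integer. The sketch below uses the classic layout of 41 bits of milliseconds, 10 bits of machine ID and 12 bits of sequence. The custom epoch is a hypothetical choice, and clock handling is deliberately simplified.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
	"time"
)

const (
	machineBits  = 10
	sequenceBits = 12
	maxMachineID = 1<<machineBits - 1
	maxSequence  = 1<<sequenceBits - 1
)

// epoch is a hypothetical custom epoch; 41 bits of milliseconds last ~69 years from it.
var epoch = time.Date(2024, 1, 1, 0, 0, 0, 0, time.UTC)

type Snowflake struct {
	mu        sync.Mutex
	machineID int64
	lastMs    int64
	sequence  int64
}

func NewSnowflake(machineID int64) (*Snowflake, error) {
	if machineID < 0 || machineID > maxMachineID {
		return nil, errors.New("machine ID out of range")
	}
	return &Snowflake{machineID: machineID, lastMs: -1}, nil
}

// Next returns a 63-bit ID: 41 bits of milliseconds since epoch,
// 10 bits of machine ID, 12 bits of per-millisecond sequence.
func (s *Snowflake) Next() int64 {
	s.mu.Lock()
	defer s.mu.Unlock()
	now := time.Since(epoch).Milliseconds()
	if now < s.lastMs {
		now = s.lastMs // clock went backwards: keep using the last timestamp
	}
	if now == s.lastMs {
		s.sequence = (s.sequence + 1) & maxSequence
		if s.sequence == 0 {
			// 4096 IDs already issued this millisecond: wait for the next one.
			for now <= s.lastMs {
				time.Sleep(100 * time.Microsecond)
				now = time.Since(epoch).Milliseconds()
			}
		}
	} else {
		s.sequence = 0
	}
	s.lastMs = now
	return now<<(machineBits+sequenceBits) | s.machineID<<sequenceBits | s.sequence
}

func main() {
	gen, err := NewSnowflake(1)
	if err != nil {
		panic(err)
	}
	for i := 0; i < 3; i++ {
		fmt.Println(gen.Next())
	}
}
```

No coordination between machines is needed as long as each one gets a distinct machine ID. That is what makes this approach attractive once you really do go distributed.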

Real-World Design

As we saw, in the real world we need to support just up to 100 writes per second and up to 4k reads per second. Both numbers look more than achievable on a single machine with a conventional relational DB.

I vibecoded a simple solution in Go that uses either SQLite or PostgreSQL to demonstrate what a single machine can do.

We will not use hashing. Instead, we use only an auto-incrementing primary key, encoded as Base64URL. So this is a non-distributed version of canonical solution #2.

To test the solution, I used my home server: a NAS motherboard with an Intel Celeron N5105 (very far from high-end hardware; it cost me about 150 EUR on AliExpress) and a regular HDD (not even an SSD).

I also simulated a pre-filled DB with 3 billion records, roughly the expected DB size after 1 year of service at Bitly's rate of about 8 million URLs per day (8M × 365 ≈ 2.9 billion).

```
$ ./dbgen -type=sqlite -count=3000000000
Total records: 3,000,000,000
Total time: 1h26m48s
Avg rate: 576,035 records/s
File size: 438.66 GB
```

And if we check the load test results, they are more than acceptable.

```
$ ./loadtest -duration=60s -concurrency=50
=== URL Creation ===
Total Requests: 152412
Successful: 152412
Failed: 0
RPS: 2540.20 req/s

=== Redirect ===
Total Requests: 952170
Successful: 952170
Failed: 0
RPS: 15869.50 req/s
```

So we get about 2,540 writes per second and 15,870 reads per second. That covers not only our realistic requirements but also the original interview requirements (1,160 writes and 11,600 reads per second). And that's on my cheap, improvised hardware.

All the scripts for data generation and load testing are available in the repo, so you can run these experiments yourself: GitHub Repo.

Availability

Since we use a single-machine setup, we get strong consistency, but what about availability? If our machine goes down, our service will not be operational until it is back online.

But how bad is that?

If we look at Bitly's SLAs, we'll see that on their Enterprise plan (they don't give any guarantees on other plans), they promise 99.9% availability. That allows about 43m 50s of downtime per month. That's quite a lot!

There are two main sources of downtime in a typical system: deployments and incidents.

Deployments are usually straightforward, and you can replace binaries on the machine quite fast. If you don't have a long warm-up for your service, it's a matter of seconds. And I can't find any reason why the described URL shortener should have any warm-up. The example that I demonstrate and test doesn't use any caching or anything besides a lightweight wrapper around a DB.

It's more problematic when you want to migrate data during the downtime, but you probably don't want to. Even in a non-distributed world, it's better to avoid incompatible changes, and this shouldn't really be a big problem if you understand how to make compatible changes. And with compatible changes, you can migrate your data while your system is running.

For incidents, it's more complicated. You can never predict whether you will have an incident tomorrow, and you can't control their duration. It may depend heavily on your code quality, your processes, and many other factors. But it's important to remember that going distributed is not a silver bullet. Even with redundancy, you may still experience downtime for other reasons. And some of these reasons might be related to the fact that you went distributed and introduced this redundancy (due to an increased number of moving parts, greater complexity, etc.).

First Steps of Scaling

Still, I agree: building simple, stateless applications that scale horizontally is a cheap way to improve scalability and availability. It's a step up in complexity, but not a big one. Since our app is stateless, it doesn't require any complex coordination between instances.

So, let's switch our application from SQLite to PostgreSQL and check the performance.

Many cloud providers offer managed PostgreSQL at a reasonable price with reasonable availability guarantees (like Multi-AZ deployments in AWS RDS). It also gives you a lot of functionality out of the box, so it's usually a good default choice, but technically, you could use any other relational DB.

What we are doing here is delegating the complexity of distributed systems to a well-matched tool that is solid, mature, well-represented on the market, and that gives us what we need. We basically build our system around this technology.

PostgreSQL

To test the PostgreSQL version, I used a c7i.large EC2 instance for the application and a db.r8g.large RDS instance for PostgreSQL, with an io2 volume at baseline IOPS for the DB.

The results of the same experiment are the following.

No Multi-AZ case:

```
$ ./loadtest -url=http://localhost:3000 -duration=60s -concurrency=50
=== URL Creation ===
Total Requests: 954455
Successful: 954455
Failed: 0
RPS: 15907.58 req/s

=== Redirect ===
Total Requests: 1474310
Successful: 1474310
Failed: 0
RPS: 24571.83 req/s
```

Multi-AZ case:

```
$ ./loadtest -url=http://localhost:3000 -duration=60s -concurrency=50
=== URL Creation ===
Total Requests: 881595
Successful: 881595
Failed: 0
RPS: 14693.25 req/s

=== Redirect ===
Total Requests: 1575232
Successful: 1575232
Failed: 0
RPS: 26253.87 req/s
```

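This option doesn't use any sharding. It's basically a single DB with an additional standby instance to improve availability. In this run, Multi-AZ cost about 8% of write throughput (likely because RDS replicates writes synchronously to the standby), while reads stayed in the same range. Still, it performs well.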

The total cost of this AWS solution is approximately USD 750 per month, which should be more than affordable if you want to compete with Bitly. And you can definitely go cheaper by adjusting the hardware to your actual load.

Below are charts showing write and read RPS for:

- PostgreSQL solution hosted on AWS

- SQLite solution hosted on my NAS

- Interview requirements

- Real-world requirements

As you can see, our lean design shows performance that easily satisfies both interview and real-world requirements.

Conclusion

Of course, classic problems like the URL Shortener are just an instrument in interviews to provoke further discussion and check how candidates approach system design. But isn't it remarkable that people are trained to answer such questions by overcomplicating everything?

As you can see, there is a lot of freedom in how to solve it. And you can pick a straightforward solution that is performant enough to beat the biggest players on the market.

And of course, in some cases this simple PostgreSQL setup will not be enough, and we'll need to scale further and adopt a different design, probably with some sharding. But how can you check whether single-machine Postgres is enough? I've prepared a benchmark and a Cheat Sheet on PostgreSQL performance across different AWS instance types. Subscribe and receive it ⬇️

Subscribe and get my PostgreSQL Sizing Cheat Sheet – RPS, latency, and cost per 1k requests for different instances, and RPS broken down by workload type.


2026-02-10