Lean System Design

Hey!

Over the past year, I became interested in a systematic approach to system design. It turns out to be quite an overused term nowadays. It covers in-depth knowledge of technological systems; how to use them and how to build new systems out of existing ones in real life; and also the "system design interview" area, which is somewhat different from the first two.

Along the way, I've also realised there is a strong push in the community toward building complex distributed systems. This is mainly driven by large tech companies, which need to scale their products to millions and millions of users. So they expect this way of thinking in their interviews, and they popularise it at conferences and through the tools they develop.

The problem is that you probably don't work at Google, and your system will never need to handle Google-scale traffic.

Distributed systems are complex, and applying approaches popularised by big tech companies can be harmful to most other companies: in cost, in development velocity, and in maintainability.

Over my 13 years in engineering, I've seen many complex distributed systems that engineering teams built over months, only to be decommissioned shortly after release for business reasons. And I've also seen systems that became so unnecessarily complicated that maintaining them took up 80% of engineers' time.

I feel we need to address these concerns with something I'd call Lean System Design.

I want to dive deeper into this topic and share my insights with a broader audience. If you'd like to read more about this and related topics, there is a subscription form at the end of this post 🙂

Real-World Requirements

Whether or not you work for a big tech company, you need to know the numbers in order to make decisions about the solutions you build.

The common issue is this: in big tech, you almost always need real scalability to support millions of users, while companies outside big tech often lack the internal expertise to decide what they actually need. Following what is popular, they may pick options that don't fit their case.

Real-world systems frequently follow requirements similar to these:

- 10 to 1000 RPS, not millions;

- latency and availability targets aimed at ordinary users, not sophisticated SLAs;

- per-use-case data correctness guarantees;

- operability and maintainability – it should be easy to diagnose and fix the issues;

- limited infra and people costs.
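To put the "10 to 1000 RPS" range in perspective, here is a quick back-of-the-envelope sketch that converts a request rate into daily volume. It assumes the rate is sustained for all 24 hours, which real traffic rarely is. Even the top of the range works out to about 86.4 million requests per day.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds

def daily_requests(rps: float) -> int:
    """Requests per day if the given rate were sustained around the clock."""
    return int(rps * SECONDS_PER_DAY)

# Print daily volume for the range mentioned above.
for rps in (10, 100, 1000):
    print(f"{rps:>5} RPS ≈ {daily_requests(rps):>11,} requests/day")
```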

It doesn't look like we need something very complicated to satisfy this, right? So why do people choose distributed?

Why Distributed?

Distributed systems are complicated by nature. When building such systems (and using existing ones), we need to constantly think about consistency and availability. And there is no single "slider" between consistency and availability that you can simply set to some point. We have a lot of tools for building distributed systems, and each one is different: it can be configured differently and will behave differently, with its own issues, problems, and trade-offs. By choosing "distributed", we take on all these complexities and have to maintain the resulting system.

However, after resolving all these issues, we'll achieve what is never possible with a single-machine system – almost infinite scalability.

There are also other potential benefits frequently associated with building distributed (in a broad sense) systems, such as better team scalability or clearer domain separation. In my opinion, though, none of these reasons is significant enough to weigh in the distributed vs non-distributed question. Team organisation and domain separation became part of the same discussion largely by accident, through the popularisation of microservices. They can be handled in very different ways even in a non-distributed world, and shouldn't be so tightly linked to it.

Single Machine

OK, so scalability. It is indeed not possible to achieve the same scalability with a single-machine setup. But what are the limits? Let's look at some real-life examples.

Probably the best-known example is Stack Overflow. In its best years, it had a single database and handled 6k queries per second, running smoothly and delivering low latency. The hardware needed to handle that would now probably cost a couple of thousand USD per month, which is more than affordable for a company of that scale. And Stack Overflow is large: most systems engineers build will never reach that scale or load. See more about their architecture.

GitHub Pages is another notable example. Until around 2015, all GitHub Pages sites were hosted on a single pair of machines (active + standby) with an nginx config regenerated by a cron job. It was a straightforward single-machine architecture that worked very well for them and served thousands of requests per second. See more.
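To show how little machinery a "config regenerated by a cron job" setup needs, here is a minimal sketch. It is an illustration, not GitHub's actual code. It assumes a hypothetical `sites` dictionary mapping hostnames to directories, a hypothetical `$site_root` variable, and a map file included from nginx's `http` block. The sketch renders an nginx `map` keyed on the `$host` variable and swaps the file in atomically.

```python
import os
import tempfile

def render_map(sites: dict[str, str], default_root: str) -> str:
    """Render an nginx `map` block from Host header to site directory.
    `sites` and `$site_root` are hypothetical names for this sketch."""
    lines = ["map $host $site_root {", f"    default {default_root};"]
    for host in sorted(sites):  # sorted so the file is stable and diff-friendly
        lines.append(f"    {host} {sites[host]};")
    lines.append("}")
    return "\n".join(lines) + "\n"

def write_atomically(path: str, content: str) -> None:
    """Write to a temp file in the same directory, then rename it over the
    target, so nginx never reads a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        f.write(content)
    os.chmod(tmp_path, 0o644)  # mkstemp creates files as 0600
    os.replace(tmp_path, path)  # atomic rename on POSIX within one filesystem
```

A cron entry would then run a script like this every few minutes, followed by `nginx -s reload`. The server block would use `root $site_root;`. No queue, no service discovery, and no coordination between nodes is involved.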

These are examples of successful companies that served millions of users while maintaining a simple architecture without large distributed systems.

Why Go Distributed?

So successful products and systems can run on a single machine, or somewhere close to it.

But I'm not rejecting distributed systems completely here. So, why should we go distributed?

In my opinion, the main reasons are:

- If you clearly see that you can't achieve the needed scalability on a single machine. To do that, you need to know "numbers" – the requirements for expected load and system growth. And you also need to understand the limits of a single machine and of the software it runs. I'll go deeper into that in my next posts.

- If you see that you can use an existing self-contained and stable distributed system and build your system around it. Databases are a common example. People who develop distributed databases have already solved many issues and addressed much of the complexity, and they usually hide part of this complexity inside. So you, as a user, only need to deal with a small portion of it and can delegate everything else to this central system. The problem is that this "small portion of complexity" is usually still quite big, and people underestimate it.

- If you are doing an interview for a big tech company 🙂
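The first reason above depends on knowing your numbers. A rough estimate can be sketched in a few lines. Every input here is an assumption you would replace with your own data: daily active users, requests per user, a peak-to-average factor (3 is an arbitrary default), monthly growth, and the single-machine capacity. Capacity in particular should come from benchmarking your own workload, not from a generic figure.

```python
import math

def peak_rps(daily_active_users: int, requests_per_user_per_day: float,
             peak_factor: float = 3.0) -> float:
    """Average RPS over a day, scaled by an assumed peak-to-average factor."""
    average = daily_active_users * requests_per_user_per_day / 86_400
    return average * peak_factor

def months_until_limit(current_rps: float, capacity_rps: float,
                       monthly_growth: float) -> float:
    """Months of compound growth before current_rps reaches capacity_rps."""
    if current_rps >= capacity_rps:
        return 0.0
    if monthly_growth <= 0:
        return math.inf  # no growth: the limit is never reached
    return math.log(capacity_rps / current_rps) / math.log(1 + monthly_growth)

# Hypothetical inputs: 100k DAU, 50 requests each per day, 10% monthly growth,
# and a single machine assumed (not measured) to handle 5,000 RPS.
peak = peak_rps(100_000, 50)
print(f"peak ≈ {peak:.0f} RPS, "
      f"limit reached in ≈ {months_until_limit(peak, 5_000, 0.10):.0f} months")
```

If the answer comes out in years rather than months, a single machine is probably a reasonable place to start, and you can revisit the question as real numbers come in.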

What's Next?

Of course, this is not only about distributed vs. non-distributed. With this post, I'd like to start a series reviewing system design topics through the lens of lean system design.

Next week, I'll pick a typical example system and walk through:

- how to estimate rough load without pretending you know the future;

- how to choose availability and consistency targets that match your business;

- and what "good enough" looks like for the first year.

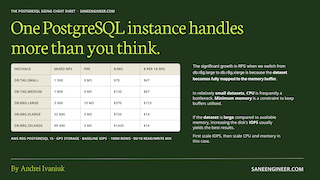

Subscribe and get my PostgreSQL Sizing Cheat Sheet – RPS, latency, and cost per 1k requests for different instances, and RPS broken down by workload type.


2026-01-15